FAST-DIPS: Adjoint-Free Analytic Steps and Hard-Constrained Likelihood Correction for Diffusion-Prior Inverse Problems

Abstract

Training-free diffusion priors provide a flexible route for solving inverse problems without retraining, but existing approaches often become slow when nonlinear forward operators require repeated derivative evaluations, inner optimization, or MCMC-style correction loops. FAST-DIPS addresses this bottleneck by replacing expensive inner procedures with a hard measurement-space feasibility constraint and an analytic step-size rule tailored to each correction step. Starting from the denoiser prediction, the method performs an adjoint-free ADMM-style likelihood correction with closed-form projection, a small number of steepest-descent updates, backtracking, and decoupled re-annealing. The framework also extends naturally to latent diffusion and introduces a one-switch pixel-to-latent hybrid schedule to balance efficiency and fidelity. Across a wide range of linear and nonlinear inverse problems, FAST-DIPS achieves competitive reconstruction quality while substantially reducing run-time, without requiring hand-crafted adjoints or inner MCMC.

Method

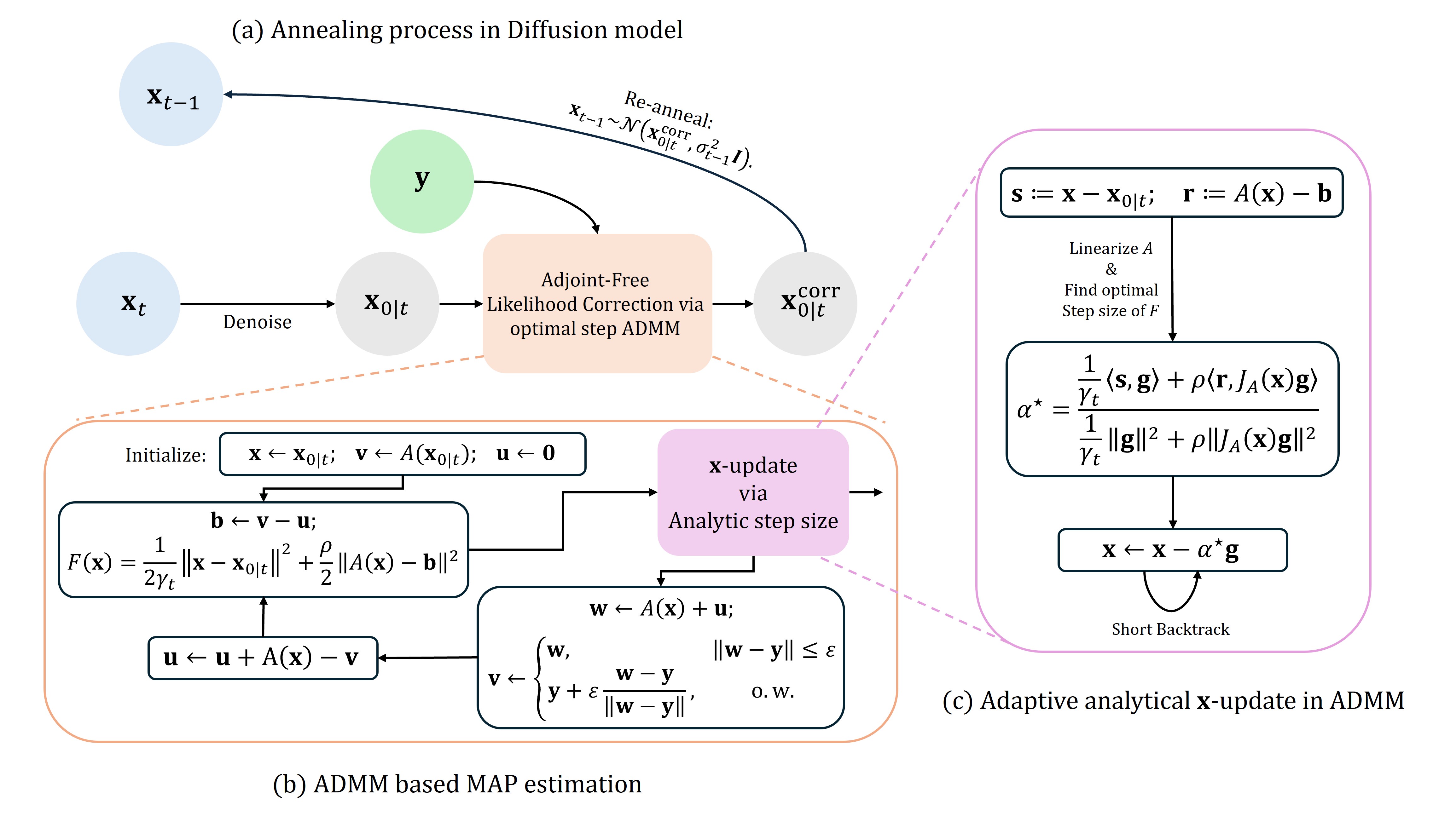

At each diffusion step, FAST-DIPS first computes a denoiser anchor, then applies a measurement-aware correction under an explicit feasibility constraint, and finally re-anneals to the next noise level. The correction is solved with an ADMM-style splitting procedure: the measurement variable is updated by closed-form projection, while the image variable is refined using an analytic step size derived from a local quadratic model. This design removes the need for hand-coded adjoints, avoids long inner correction loops, and keeps the per-step computation lightweight. In addition, the latent extension and pixel-to-latent hybrid schedule reduce unnecessary decoder-side overhead while preserving manifold-consistent reconstructions in later stages.
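The correction loop described above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation. It assumes a linear forward operator stored as a dense matrix `A`, so the transpose stands in for the vector-Jacobian product that automatic differentiation would supply for a nonlinear operator. It also assumes the feasibility set is an l2 ball of radius `eps` around the measurement `y`. The names `project_onto_ball`, `analytic_step`, and `correct`, and the defaults for `rho`, `gamma`, `n_admm`, and `n_grad`, are hypothetical. Backtracking and re-annealing are omitted.

```python
import numpy as np


def project_onto_ball(v, y, eps):
    """Closed-form projection of v onto the set {z : ||z - y||_2 <= eps}."""
    d = v - y
    norm = np.linalg.norm(d)
    if norm <= eps:
        return v.copy()  # already feasible
    return y + (eps / norm) * d  # rescale onto the ball surface


def analytic_step(g, Jg, rho, gamma):
    """Step size minimizing the 1-D quadratic model along the direction -g.

    Model: f(x - a*g) ~ f(x) - a*||g||^2 + 0.5*a^2*(rho*||Jg||^2 + gamma*||g||^2)
    """
    gg = float(np.vdot(g, g))
    denom = rho * float(np.vdot(Jg, Jg)) + gamma * gg
    return gg / denom if denom > 0 else 0.0


def correct(x0, y, A, eps, rho=1.0, gamma=0.1, n_admm=3, n_grad=2):
    """ADMM-style correction of the denoiser anchor x0 (1-D vectors assumed).

    Splitting: minimize 0.5*gamma*||x - x0||^2 subject to A x = z, z in the ball.
    """
    x = x0.copy()
    z = project_onto_ball(A @ x, y, eps)  # measurement-space variable
    u = np.zeros_like(y)                  # scaled dual variable
    for _ in range(n_admm):
        # Image update: a few steepest-descent steps with the analytic step size.
        for _ in range(n_grad):
            r = A @ x - z + u
            g = rho * (A.T @ r) + gamma * (x - x0)  # A.T plays the role of a VJP
            alpha = analytic_step(g, A @ g, rho, gamma)
            x = x - alpha * g
        # Measurement update: closed-form projection, then dual ascent.
        z = project_onto_ball(A @ x + u, y, eps)
        u = u + A @ x - z
    return x
```

For a linear `A` the quadratic model is exact, so `analytic_step` amounts to an exact line search along the negative gradient. For a nonlinear operator the model is only local, which is one reason a backtracking safeguard is useful alongside it.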

Experimental Results

Quantitative Evaluation

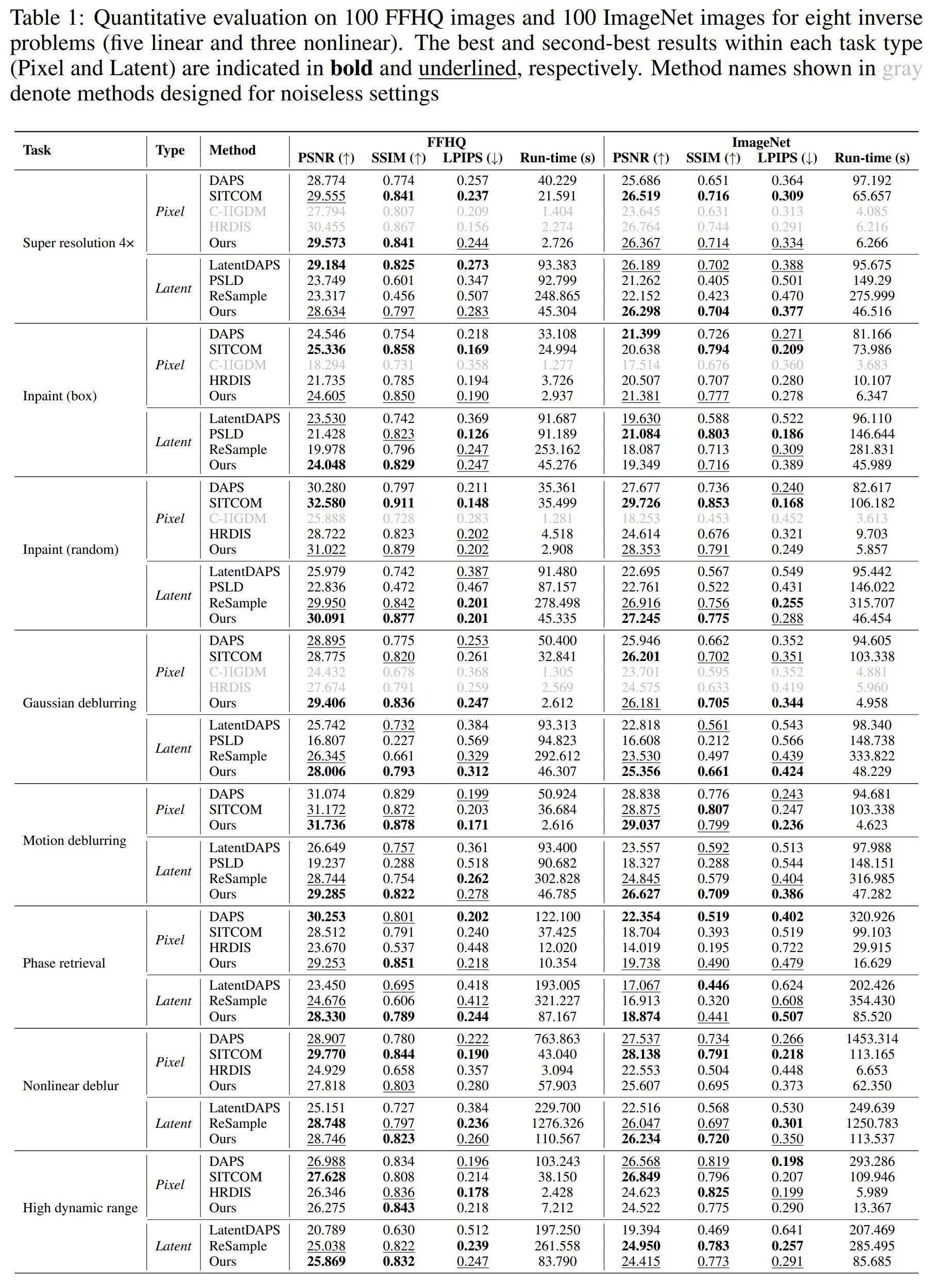

We evaluate FAST-DIPS on 100 FFHQ images and 100 ImageNet images across eight inverse problems, covering both linear and nonlinear settings. We report PSNR, SSIM, LPIPS, and run-time for the pixel-space and latent-space variants and compare against recent diffusion-based baselines. Overall, FAST-DIPS delivers competitive or better reconstruction quality while substantially reducing run-time, with especially strong efficiency gains in deblurring and phase retrieval tasks.
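For readers unfamiliar with the first metric, PSNR is defined as 10·log10(MAX² / MSE), where MAX is the dynamic range of the image and MSE is the mean squared error against the reference. The helper below is a minimal sketch of that standard definition. The function name `psnr` and the default `data_range=1.0` (images scaled to [0, 1]) are assumptions, since the page does not state the evaluation conventions used.

```python
import numpy as np


def psnr(reference, estimate, data_range=1.0):
    """Peak signal-to-noise ratio in dB; higher means closer to the reference."""
    ref = np.asarray(reference, dtype=np.float64)
    est = np.asarray(estimate, dtype=np.float64)
    mse = np.mean((ref - est) ** 2)
    if mse == 0:
        return float("inf")  # identical images
    return float(10.0 * np.log10(data_range ** 2 / mse))
```

SSIM and LPIPS require windowed statistics and a pretrained network respectively, so they are not sketched here.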

Runtime–Quality Tradeoff

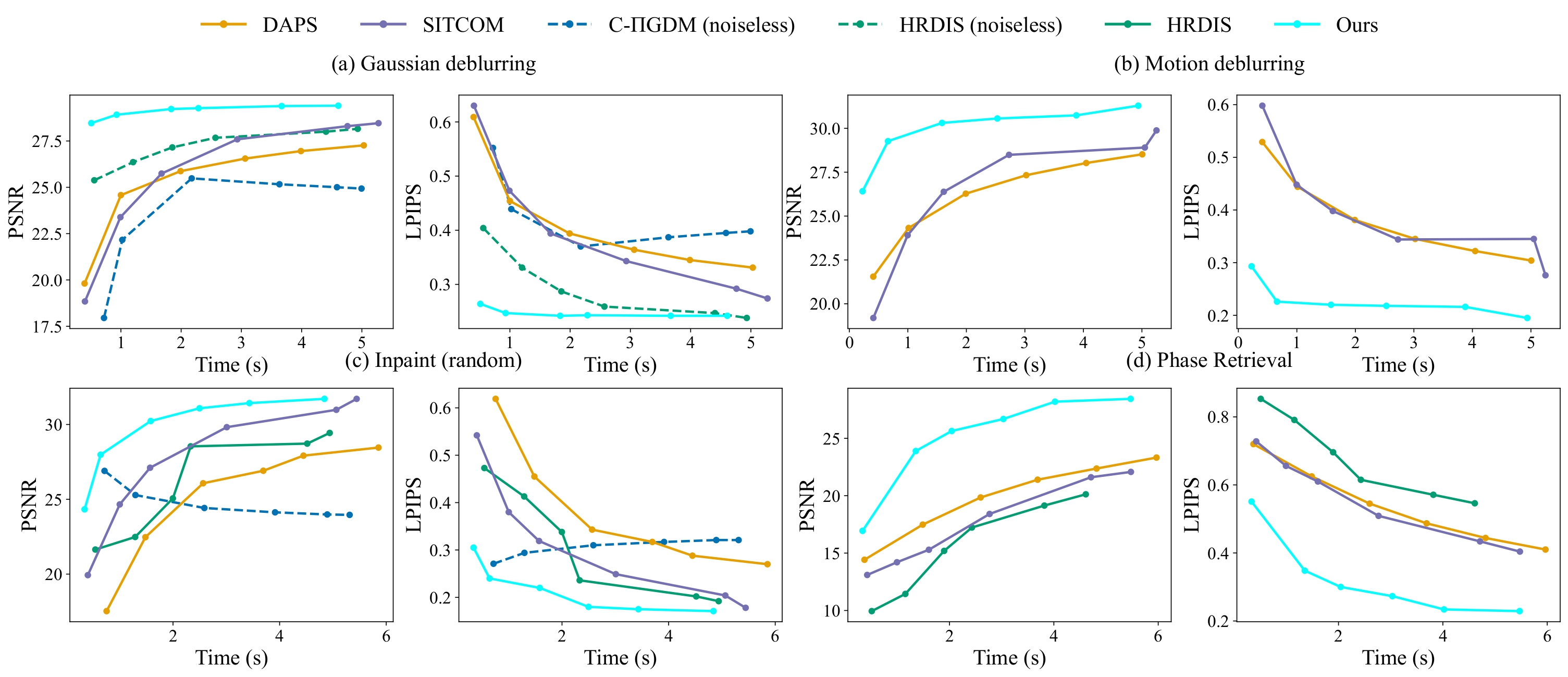

To make the speed advantage explicit, we also compare reconstruction quality against average per-image run-time under matched compute budgets. The trend is consistent across Gaussian deblurring, motion deblurring, random inpainting, and phase retrieval: FAST-DIPS improves steadily as more compute is allocated, while maintaining a clear advantage over competing methods in the practical low-to-mid run-time regime.

Qualitative Results

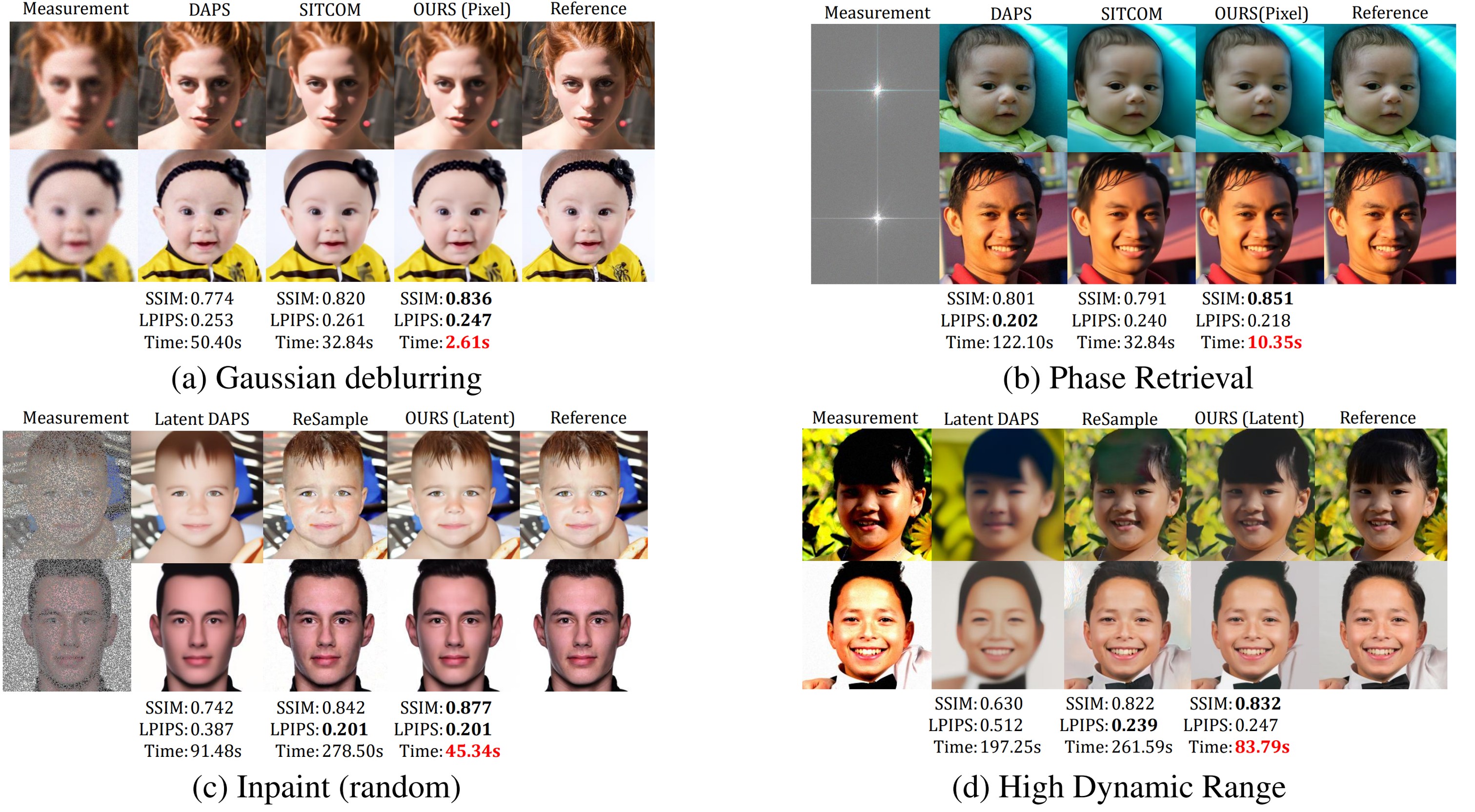

These visual comparisons highlight the behavior of FAST-DIPS across representative inverse problems. Even in challenging settings such as phase retrieval, random inpainting, and high dynamic range reconstruction, the method recovers perceptually convincing results while preserving strong structural consistency.

BibTeX

@inproceedings{kim2026fastdips,
  author = {Minwoo Kim and Seunghyeok Shin and Hongki Lim},
  title = {FAST-DIPS: Adjoint-Free Analytic Steps and Hard-Constrained Likelihood Correction for Diffusion-Prior Inverse Problems},
  booktitle = {International Conference on Learning Representations (ICLR)},
  year = {2026}
}